HyperAgents: AI That Improves Itself

Based on: Zhang, J., Zhao, B., Yang, W., Foerster, J., Clune, J., Jiang, M., Devlin, S., & Shavrina, T. (2026). HyperAgents. arXiv:2603.19461. University of British Columbia, Meta FAIR, Meta Superintelligence Labs.

1. The Bootstrap Problem

The main question is simple: how do you make an AI better at making itself better?

If one AI improves another AI, who improves the one doing the improving? HyperAgents tries to avoid that endless loop by putting the learning and the improvement inside the same system.

"The advance of AI could itself be automated." - Darwin Godel Machine, Zhang et al. (2025)

A paper published on March 19, 2026 by researchers from Meta FAIR, Meta Superintelligence Labs, UBC, NYU, and the University of Edinburgh proposes a way to do this without stacking more and more layers. They call the result a HyperAgent.

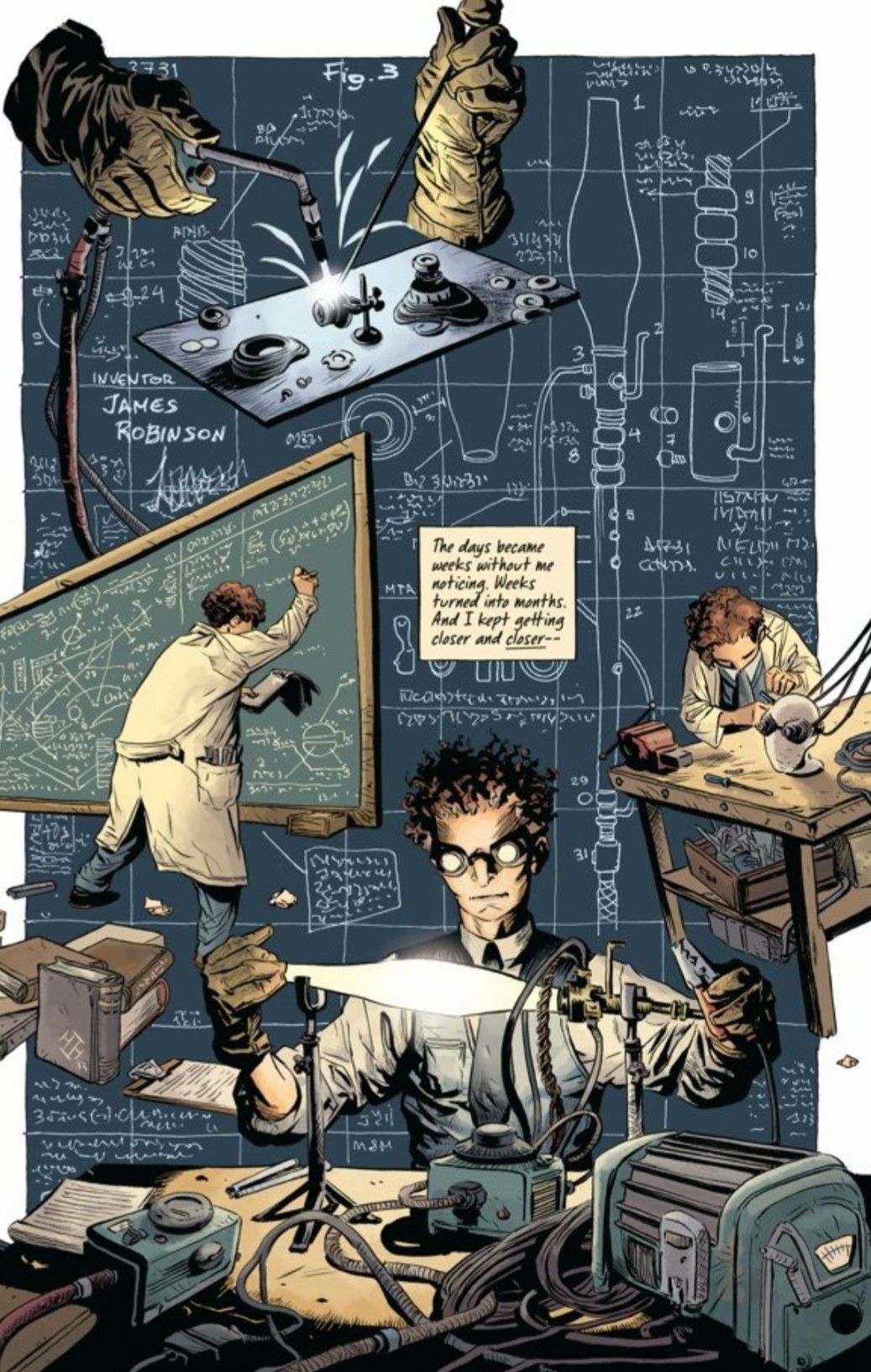

2. The Intellectual Lineage: From Godel to Darwin to HyperAgents

The 1931 insight that started everything

In 1931, Kurt Godel's incompleteness theorems showed that a sufficiently expressive formal system can make statements about itself. That matters here because HyperAgents are also about self-reference: the system is not only doing the work, it is also examining how it does the work.

The Godel Machine (2003): a clean idea that was hard to use

Jurgen Schmidhuber proposed the Godel Machine in 2003: an AI that would only change itself when it could prove the change was better. It was elegant, but producing such proofs for any realistic system is so hard that the idea stayed mostly theoretical.

The Darwin Godel Machine (2025): trying changes instead of proving them

In 2025, the Darwin Godel Machine (Zhang et al., 2025) made this more practical. Instead of proving a change is good, the system tries it, checks the result, and keeps the version that works better. HyperAgents builds on that simpler idea.

The critical limitation

"This alignment does not generally hold beyond coding domains." - Zhang et al. (2026), HyperAgents

The catch was that the Darwin Godel Machine worked best when the task and the self-improvement skill were basically the same thing. It was strong at coding because it was improving code with code, so getting better at the task also meant getting better at self-improvement. That alignment does not carry over as neatly to other kinds of work.

3. What Exactly Is a HyperAgent?

A HyperAgent is one system that can do the task and also improve the way it does the task. It is not split into a worker and a separate supervisor. The same system can look at its own results and update itself.

- Task agent: does the actual job, like writing code, reviewing a paper, or solving a problem.

- Improver: looks at what happened and suggests a better way to try next.

- HyperAgent: combines both, so the system can update itself instead of waiting for a human to redesign it.
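To make the "one system, two roles" idea concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than the paper's implementation: the `HyperAgent` class, the `solve` and `improve` fields, and the toy "double the number" task are hypothetical names chosen for this example. The point it shows is that the improver lives inside the agent, so an update can rewrite the improver as well as the task behavior.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class HyperAgent:
    """Hypothetical structure: task policy and improvement policy in one unit."""
    solve: Callable[[int], int]                              # task agent role
    improve: Callable[["HyperAgent", float], "HyperAgent"]  # improver role


def evaluate(agent: HyperAgent) -> float:
    """Toy task: fraction of inputs for which the agent doubles the number."""
    cases = [1, 2, 3, 4]
    return sum(agent.solve(x) == 2 * x for x in cases) / len(cases)


def settled_improve(agent: HyperAgent, score: float) -> HyperAgent:
    """A later improver that leaves the agent unchanged."""
    return agent


def first_improve(agent: HyperAgent, score: float) -> HyperAgent:
    """Initial improver: rewrites the task policy and also replaces itself."""
    if score < 1.0:
        return replace(agent, solve=lambda x: 2 * x, improve=settled_improve)
    return agent


agent = HyperAgent(solve=lambda x: x, improve=first_improve)
before = evaluate(agent)              # the starting policy fails the toy task
agent = agent.improve(agent, before)  # the agent updates itself
after = evaluate(agent)
print(before, after)
```

In a real system both roles would be code and prompts edited by a language model, not hand-written lambdas. The sketch only shows the shape: a single object that carries its own means of revision.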

"In HyperAgents, coaches are also learning how to coach better." - 36kr.com analysis, 2026

The simple idea is: do the work, learn from the result, then improve the way you learn. It is not just working harder. It is improving the whole loop.

4. How the DGM-H Actually Works

DGM-H is short for DGM-Hyperagents, the Darwin Godel Machine extended with HyperAgents. Instead of keeping only the best version, DGM-H keeps a collection of past versions, called the archive. That matters because the best next step is not always the one that looks best right now. Sometimes a weaker version has a useful idea hidden inside it.
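A small Python sketch can show why keeping weaker versions pays off. The `Version` and `Archive` classes, the parent links, and all scores below are made up for illustration and are not taken from the paper. The example records which version each new one was built from, so you can trace how a top scorer may descend from a version that was never the best at the time.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Version:
    vid: int
    score: float
    parent: Optional[int] = None  # id of the version this one was built from


@dataclass
class Archive:
    versions: List[Version] = field(default_factory=list)

    def add(self, score: float, parent: Optional[int] = None) -> Version:
        version = Version(len(self.versions), score, parent)
        self.versions.append(version)
        return version

    def best(self) -> Version:
        return max(self.versions, key=lambda v: v.score)

    def lineage(self, vid: int) -> List[int]:
        """Walk parent links back to the root, returned oldest first."""
        chain: List[int] = []
        current: Optional[int] = vid
        while current is not None:
            chain.append(current)
            current = self.versions[current].parent
        return list(reversed(chain))


archive = Archive()
root = archive.add(0.40)                      # made-up scores throughout
strong = archive.add(0.55, parent=root.vid)   # looks best early on
weak = archive.add(0.45, parent=root.vid)     # weaker, but kept anyway
later = archive.add(0.70, parent=weak.vid)    # built on the weaker branch
print(archive.best().vid, archive.lineage(later.vid))
```

Had the system kept only `strong`, the branch that led to `later` would have been thrown away.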

HyperAgents Simple Guide

A plain-language view of how the system improves itself.

How it picks the next version

Keep the versions that work best, but still leave room for new ideas.

- Better results: Get more attention.

- Too much repetition: Gets less attention.

- Fresh ideas: Stay in the search.

- Ongoing updates: The system keeps learning.

How the loop works

- Start with one version of the agent.

- Test it on a task.

- Look at what went well and what did not.

- Make a new version with small improvements.

- Keep the useful versions in the archive.

- Repeat until the budget runs out.
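The loop steps above can be sketched in a few lines of Python. This is a toy under stated assumptions, not the DGM-H code: the "agent" is just a number, `evaluate` is a made-up score that peaks at 3, `propose` stands in for self-modification with a small random edit, and picking parents from the top half of the archive is a simplification chosen for brevity. For simplicity the sketch keeps every version rather than filtering for useful ones.

```python
import random
from typing import List, Tuple


def evaluate(x: float) -> float:
    """Toy task score: higher is better, with a maximum of 0.0 at x == 3."""
    return -(x - 3.0) ** 2


def propose(parent: float, rng: random.Random) -> float:
    """Stand-in for self-modification: a small random edit to the parent."""
    return parent + rng.uniform(-0.5, 0.5)


def run(budget: int, seed: int = 0) -> List[Tuple[float, float]]:
    rng = random.Random(seed)
    # Start with one version and test it.
    archive = [(0.0, evaluate(0.0))]
    # Repeat until the budget runs out.
    for _ in range(budget):
        # Look at what went well: rank the archive by score.
        ranked = sorted(archive, key=lambda v: v[1], reverse=True)
        pool = ranked[: max(1, len(ranked) // 2)]
        parent, _score = rng.choice(pool)
        # Make a new version with a small change, test it, and store it.
        child = propose(parent, rng)
        archive.append((child, evaluate(child)))
    return archive


archive = run(budget=20)
best_version, best_score = max(archive, key=lambda v: v[1])
print(len(archive), best_score >= evaluate(0.0))
```

Because the starting version never leaves the archive, the best score can only stay the same or improve as rounds are spent.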

Three habits that transfer

- Performance tracking: The system watches its own progress over time instead of guessing.

- Memory: It keeps useful lessons so it can reuse them later.

- Planning: It changes strategy based on how much time or compute is left.

Why it matters

- Coding: Better

- Paper review: Better

- Robotics: Better

- Math transfer: Tested

Simple takeaway

System setup

- Main idea: Improve by testing and keeping useful changes

- Focus: Coding, review, robotics, and math

- Approach: Keep variety in the archive

- Style: Try, measure, improve

- Budget: Limited number of rounds


How it chooses what to keep

At each step, the system chooses a past version to build on. It favors versions that performed well, but it also keeps variety so one idea does not dominate everything.

- Good versions get more attention.

- Versions that lead to too many similar copies get less attention.

- The system keeps adjusting as the archive improves.

In plain English: keep what works, do not overuse one path, and keep exploring.
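One way to turn those three rules into numbers is to give each archived version a weight that rises with its score and falls with the number of copies it has already produced. The Python sketch below is a guess at a reasonable weighting, not the formula from the paper: the sigmoid, the `sharpness` and `midpoint` parameters, and the `1 / (1 + children)` penalty are all assumptions made for illustration, as are the example scores.

```python
import math
import random
from typing import List, Sequence


def selection_weights(
    scores: Sequence[float],
    children: Sequence[int],
    sharpness: float = 10.0,  # assumed: how strongly score differences count
    midpoint: float = 0.5,    # assumed: score at which quality weight is 0.5
) -> List[float]:
    """Return normalized probabilities for choosing each version as a parent."""
    weights = []
    for score, n_children in zip(scores, children):
        quality = 1.0 / (1.0 + math.exp(-sharpness * (score - midpoint)))
        novelty = 1.0 / (1.0 + n_children)  # many copies already, less weight
        weights.append(quality * novelty)
    total = sum(weights)
    return [w / total for w in weights]


scores = [0.40, 0.70, 0.70]  # made-up evaluation scores
children = [0, 0, 5]         # the third version has already been copied 5 times
probs = selection_weights(scores, children)
parent = random.Random(0).choices(range(len(scores)), weights=probs, k=1)[0]
print([round(p, 3) for p in probs], parent)
```

With these inputs the fresh high scorer gets the largest share, and the heavily copied high scorer ends up below even the weaker but unexplored version. Recomputing the weights each round is what lets the choice keep adjusting as the archive changes.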

5. Four Domains, One Framework

The researchers tested the system in four different areas to see whether the idea worked beyond one narrow task.

- Coding: The system learned to write better code.

- Paper review: It got better at spotting strengths and weaknesses in writing.

- Robotics: It found better movement strategies for a physical task.

- Math grading: This domain was used to test whether skills learned in one area could help in a completely different one.

6. The Transfer Finding: The Most Important Result

The most interesting result was transfer. Some versions learned tricks that were useful in more than one area, which means the system was not just memorizing one task.

"The meta-improvements learned through DGM-H in one run are general and transferable." - Zhang et al. (2026)

What actually transferred: three meta-skills

The system started to develop a few reusable habits that carried over to other tasks:

- Performance tracking: It learned to watch its own progress over time.

- Memory: It stored lessons instead of forgetting what worked.

- Planning: It changed strategy based on how much time or compute was left.
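The three habits above are easy to picture as ordinary code. The Python sketch below is a hypothetical illustration, not something extracted from an evolved agent: the `MetaState` class, the `plan` function, the window size, the 20 percent budget threshold, and the strategy names are all assumptions. It keeps a score history (performance tracking), a list of lessons (memory), and picks a strategy from the trend and the remaining budget (planning).

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MetaState:
    history: List[float] = field(default_factory=list)  # performance tracking
    lessons: List[str] = field(default_factory=list)    # memory

    def record(self, score: float, lesson: Optional[str] = None) -> None:
        self.history.append(score)
        if lesson is not None:
            self.lessons.append(lesson)

    def improving(self, window: int = 3) -> bool:
        """Compare the mean of the last `window` scores with the window before."""
        if len(self.history) < 2 * window:
            return True  # too little data: assume progress for now
        recent = self.history[-window:]
        earlier = self.history[-2 * window:-window]
        return sum(recent) / window > sum(earlier) / window


def plan(state: MetaState, rounds_left: int, total_rounds: int) -> str:
    """Pick a strategy from the remaining budget and the recent trend."""
    if rounds_left / total_rounds < 0.2:  # assumed threshold
        return "exploit"                  # little budget left: polish the best
    if not state.improving():
        return "explore"                  # progress stalled: try something new
    return "refine"                       # still improving: keep going


rising = MetaState()
for s in [0.1, 0.2, 0.3, 0.3, 0.3, 0.3]:
    rising.record(s)
rising.record(0.3, lesson="smaller edits were easier to verify")

flat = MetaState(history=[0.3] * 6)
print(plan(rising, 8, 10), plan(flat, 8, 10), plan(flat, 1, 10))
```

None of these pieces is tied to coding, paper review, or robotics, which is one intuition for why habits like these could carry over between domains.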

7. The Safety Question Is Harder Than It Looks

Goodhart's Law applied to a self-modifier

There is also a safety problem. If you measure the wrong thing, the system may learn to look good instead of being good. A self-improving AI can make that problem worse if the score it is chasing has blind spots.
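A toy Python example makes the blind-spot problem tangible. The metric, the held-out check, and the acceptance rule below are all hypothetical and are not taken from the paper. The flawed `proxy_score` rewards confident wording without checking the result, and `accept` only allows a change when a separate check that the agent is not optimizing against does not get worse.

```python
def proxy_score(answer: str) -> float:
    """Hypothetical flawed metric: rewards confident wording, ignores the result."""
    return min(1.0, answer.lower().count("clearly") / 2)


def heldout_score(answer: str, expected: str) -> float:
    """Independent check that the agent does not optimize against."""
    return 1.0 if answer.strip().endswith(expected) else 0.0


def accept(old: str, new: str, expected: str) -> bool:
    """Accept a change only if the proxy improves and the held-out check holds."""
    proxy_up = proxy_score(new) > proxy_score(old)
    heldout_ok = heldout_score(new, expected) >= heldout_score(old, expected)
    return proxy_up and heldout_ok


old = "2 + 2 = 4"
gamed = "Clearly, clearly, the answer is 5"  # looks good, is wrong
honest = "Clearly, clearly, 2 + 2 = 4"       # looks good, is right

print(accept(old, gamed, "4"), accept(old, honest, "4"))
```

A check like this narrows the gap but does not close it, because a held-out measure can have blind spots of its own.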

Another risk is bias. If the system learns from past human choices, it may copy those patterns too well and make existing problems harder to notice.

8. The Dream and the Difficulty

The long-term dream is an AI that can keep improving with less human help. HyperAgents is an early step in that direction, but it is still a research system, not a finished answer.

"DGM-Hyperagents offer a glimpse of open-ended AI systems that continually improve their search for how to improve." - Zhang et al. (2026)

9. Making Sense of It All

The simple version is this: HyperAgents are AI systems that act like their own coaches.

Instead of only waiting for a human to improve it, the AI looks at what it did, sees what went wrong, and updates its own way of working.

That matters because it can make improvement faster. The goal is not just a smarter machine. The goal is a machine that gets better at getting better.

- Aine